Improving Medical Visual Representations via Radiology Report Generation, by Keegan Quigley and 6 other authors

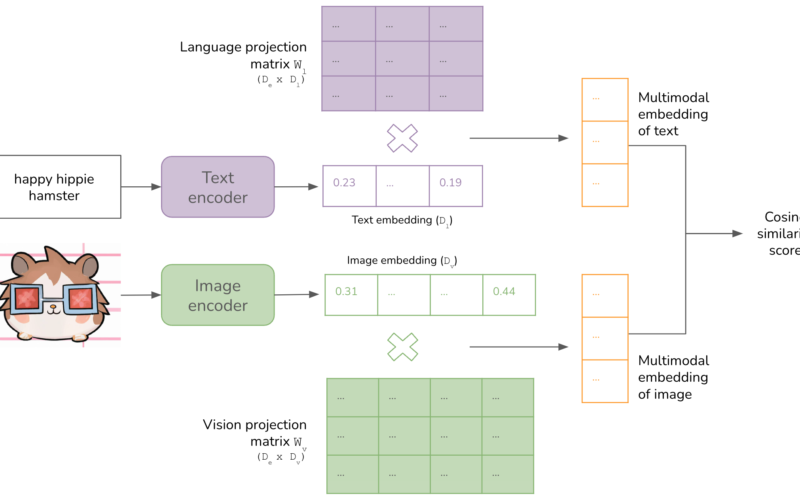

Abstract: Vision-language pretraining has been shown to produce high-quality visual encoders that transfer efficiently to downstream computer vision tasks. Contrastive learning approaches have increasingly been adopted for medical vision-language pretraining (MVLP), yet recent developments in generative AI offer new modeling alternatives. This paper introduces RadTex, a CNN-encoder, transformer-decoder architecture optimized for radiology. We explore bidirectional captioning as an alternative MVLP strategy and demonstrate that RadTex’s captioning pretraining is competitive with established contrastive methods, achieving a CheXpert macro-AUC of 89.4%. Additionally, RadTex’s lightweight text decoder not only generates clinically relevant radiology reports (macro-F1 score of 0.349), but also provides targeted, interactive responses, highlighting the utility of bidirectional captioning in advancing medical image analysis.
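The abstract reports a macro-AUC, which is the unweighted mean of per-class AUC values in a multi-label setting. The following is a minimal sketch of that averaging, not the paper's evaluation code: the function names `binary_auc` and `macro_auc` are hypothetical, and the labels and scores are invented toy values, not CheXpert data.

```python
from typing import Sequence


def binary_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC for one class: the fraction of (positive, negative) pairs in which
    the positive example gets the higher score. Ties count as half a win."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def macro_auc(label_matrix: Sequence[Sequence[int]],
              score_matrix: Sequence[Sequence[float]]) -> float:
    """Unweighted mean of per-class AUCs. Rows are examples, columns are classes."""
    num_classes = len(label_matrix[0])
    per_class = []
    for c in range(num_classes):
        labels = [row[c] for row in label_matrix]
        scores = [row[c] for row in score_matrix]
        per_class.append(binary_auc(labels, scores))
    return sum(per_class) / num_classes


# Toy example (assumed values): four studies, two findings.
y_true = [[1, 0], [0, 1], [1, 1], [0, 0]]
y_score = [[0.9, 0.2], [0.3, 0.8], [0.6, 0.4], [0.4, 0.5]]
print(macro_auc(y_true, y_score))
```

Because every class contributes equally to the mean, a rare finding affects the macro-AUC as much as a common one.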

Submission history

From: Keegan Quigley

[v1] Mon, 30 Oct 2023 15:25:29 UTC (2,275 KB)

[v2] Fri, 10 Jan 2025 16:51:33 UTC (1,349 KB)
