
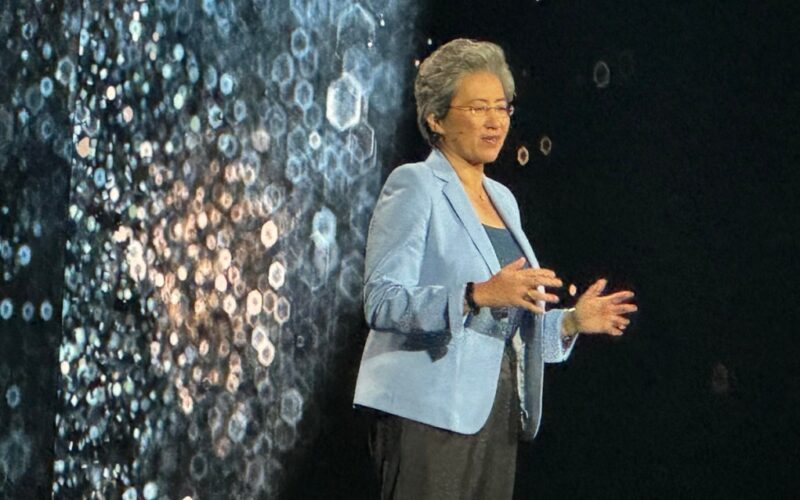

Speaking at an event in San Francisco, AMD CEO Lisa Su unveiled AI-infused chips across the company’s Ryzen, Instinct and Epyc brands, fueling a new generation of AI computing for everyone from business users to data centers.

Throughout the event, AMD indirectly made references to rivals such as Nvidia and Intel by emphasizing its quest to provide technology that was open and accessible to the widest variety of customers, without an intent to lock those customers into proprietary solutions.

Su said AI will boost personal productivity, improve collaboration with features like real-time translation, and make life easier for creators and ordinary users alike. It will be processed locally to protect your privacy, Su said. She noted the new AMD Ryzen AI Pro PCs will be Copilot+-ready and offer up to 23 hours of battery life (and nine hours using Microsoft Teams).

“We’ve been working very closely with AI PC ecosystem developers,” she said, noting more than 100 will be working on AI apps by the end of the year.

Commercial AI mobile Ryzen processors

AMD announced its third generation commercial AI mobile processors, designed specifically to transform business productivity with Copilot+ features including live captioning and language translation in conference calls and advanced AI image generators. If you really wanted to, you could use AI-based Microsoft Teams for up to nine hours on new laptops equipped with the AMD processors.

The new Ryzen AI PRO 300 Series processors deliver industry-leading AI compute, with up to three times the AI performance of the previous generation of AMD processors. More than 100 products using the Ryzen processors are on the way through 2025.

Enabled with AMD PRO Technologies, the Ryzen AI PRO 300 Series processors offer high security and manageability features designed to streamline IT operations and ensure exceptional ROI for businesses.

Ryzen AI PRO 300 Series processors feature the new AMD Zen 5 architecture, delivering outstanding CPU performance, and are the world’s best lineup of commercial processors for Copilot+ enterprise PCs, according to AMD. Zen, now in its fifth generation, has been the foundation behind AMD’s own financial recovery, its gains in market share against Intel, and Intel’s own subsequent hard times and layoffs.

“I think the best is that AMD continue to execute on a solid product roadmap. Unfortunately they are making performance comparisons to the competition’s previous generation products,” said Jim McGregor, an analyst at Tirias Research, in an email to VentureBeat. “So, we have to wait and see how the products will compare. However, I do expect them to be highly competitive especially the processors. Note that AMD only announced a new architecture for networking, everything else is evolutionary but that’s not a bad thing when you are in a strong position and gaining market share.”

Laptops equipped with Ryzen AI PRO 300 Series processors are designed to tackle business’ toughest workloads, with the top-of-stack Ryzen AI 9 HX PRO 375 offering up to 40% higher performance and up to 14% faster productivity performance compared to Intel’s Core Ultra 7 165U, AMD said.

With the addition of XDNA 2 architecture powering the integrated NPU (the neural processing unit, or AI-focused part of the processor), AMD Ryzen AI PRO 300 Series processors offer a cutting-edge 50+ NPU TOPS (Trillions of Operations Per Second) of AI processing power, exceeding Microsoft’s Copilot+ AI PC requirements and delivering exceptional AI compute and productivity capabilities for the modern business.

Built on a 4 nanometer (nm) process and with innovative power management, the new processors deliver extended battery life ideal for sustained performance and productivity on the go.

“Enterprises are increasingly demanding more compute power and efficiency to drive their everyday tasks and most taxing workloads. We are excited to add the Ryzen AI PRO 300 Series, the most powerful AI processor built for business PCs, to our portfolio of mobile processors,” said Jack Huynh, senior vice president and general manager of the computing and graphics group at AMD, in a statement. “Our third generation AI-enabled processors for business PCs deliver unprecedented AI processing capabilities with incredible battery life and seamless compatibility for the applications users depend on.”

AMD expands commercial OEM ecosystem

OEM partners continue to expand their commercial offerings with new PCs powered by Ryzen AI PRO 300 Series processors, delivering well-rounded performance and compatibility to their business customers. With industry leading TOPS, the next generation of Ryzen processor-powered commercial PCs are set to expand the possibilities of local AI processing with Microsoft Copilot+. OEM systems powered by Ryzen AI PRO 300 Series are expected to be on shelf starting later this year.

“Microsoft’s partnership with AMD and the integration of Ryzen AI PRO processors into Copilot+ PCs demonstrate our joint focus on delivering impactful AI-driven experiences for our customers. The Ryzen AI PRO’s performance, combined with the latest features in Windows 11, enhances productivity, efficiency, and security,” said Pavan Davuluri, corporate vice president for Windows + Devices at Microsoft, in a statement. “Features like Improved Windows Search, Recall, and Click to Do make PCs more intuitive and responsive. Security enhancements, including the Microsoft Pluton security processor and Windows Hello Enhanced Sign-in Security, help safeguard customer data with advanced protection. We’re proud of our strong history of collaboration with AMD and are thrilled to bring these innovations to market.”

“In today’s AI-powered era of computing, HP is dedicated to delivering powerful innovation and performance that revolutionizes the way people work,” said Alex Cho, president of Personal Systems at HP, in a statement. “With the HP EliteBook X Next-Gen AI PC, we are empowering modern leaders to push boundaries without compromising power or performance. We are proud to expand our AI PC lineup powered by AMD, providing our commercial customers with a truly personalized experience.”

“Lenovo’s partnership with AMD continues to drive AI PC innovation and deliver supreme performance for our business customers. Our recently announced ThinkPad T14s Gen 6 AMD, powered by the latest AMD Ryzen AI PRO 300 Series processors, showcases the strength of our collaboration,” said Luca Rossi, president, Lenovo Intelligent Devices Group. “This device offers outstanding AI computing power, enhanced security, and exceptional battery life, providing professionals with the tools they need to maximize productivity and efficiency. Together with AMD, we are transforming the business landscape by delivering smarter, AI-driven solutions that empower users to achieve more.”

New PRO Technologies features for security and management

In addition to AMD Secure Processor, AMD Shadow Stack and AMD Platform Secure Boot, AMD has expanded its PRO Technologies lineup with new security and manageability features.

Processors equipped with PRO Technologies will now come standard with Cloud Bare Metal Recovery, which lets IT teams recover systems via the cloud to keep operations running smoothly; Supply Chain Security (AMD Device Identity), a new function that enables traceability across the supply chain; and Watch Dog Timer, which builds on existing resiliency support with additional detection and recovery processes.

Additional AI-based malware detection is available via PRO Technologies with select ISV partners. These new security features leverage the integrated NPU to run AI-based security workloads without impacting day-to-day performance.

AMD unveils Instinct MI325X accelerators for AI data centers

AMD has become a big player in graphics processing units (GPUs) for data centers, and today it announced its latest AI accelerators and networking solutions for AI infrastructure.

The company unveiled the AMD Instinct MI325X accelerators, the AMD Pensando Pollara 400 network interface card (NIC) and the AMD Pensando Salina data processing unit (DPU).

AMD claimed the AMD Instinct MI325X accelerators set a new standard in performance for Gen AI models and data centers. Built on the AMD CDNA 3 architecture, AMD Instinct MI325X accelerators are designed for performance and efficiency for demanding AI tasks spanning foundation model training, fine-tuning and inferencing.

Together, these products enable AMD customers and partners to create highly performant and optimized AI solutions at the system, rack and data center level.

“AMD continues to deliver on our roadmap, offering customers the performance they need and the choice they want, to bring AI infrastructure, at scale, to market faster,” said Forrest Norrod, executive vice president and general manager of the data center solutions business group at AMD, in a statement. “With the new AMD Instinct accelerators, EPYC processors and AMD Pensando networking engines, the continued growth of our open software ecosystem, and the ability to tie this all together into optimized AI infrastructure, AMD underscores the critical expertise to build and deploy world class AI solutions.”

AMD Instinct MI325X accelerators deliver industry-leading memory capacity and bandwidth, with 256GB of HBM3E supporting 6.0TB/s, offering 1.8 times more capacity and 1.3 times more bandwidth than the Nvidia H200, AMD said. The AMD Instinct MI325X also offers 1.3 times greater peak theoretical FP16 and FP8 compute performance compared to the H200.
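As a quick sanity check on those multipliers, the sketch below divides AMD's stated MI325X figures by the stated ratios to see what H200 baseline they imply. The inputs are only the numbers quoted above; the variable and function names are illustrative, and because the multipliers are rounded, the outputs are approximations rather than official Nvidia specifications.

```python
# Figures as stated in the article (AMD's claims, not independent measurements).
MI325X_CAPACITY_GB = 256
MI325X_BANDWIDTH_TBS = 6.0
CAPACITY_MULTIPLIER = 1.8   # "1.8 times more capacity" than the H200
BANDWIDTH_MULTIPLIER = 1.3  # "1.3 times more bandwidth" than the H200


def implied_baseline(value: float, multiplier: float) -> float:
    """Return the baseline implied by value = multiplier * baseline."""
    return value / multiplier


implied_capacity_gb = implied_baseline(MI325X_CAPACITY_GB, CAPACITY_MULTIPLIER)
implied_bandwidth_tbs = implied_baseline(MI325X_BANDWIDTH_TBS, BANDWIDTH_MULTIPLIER)

print(f"Implied H200 capacity:  about {implied_capacity_gb:.0f} GB")
print(f"Implied H200 bandwidth: about {implied_bandwidth_tbs:.1f} TB/s")
```

The implied baseline is only as precise as the rounded multipliers, so it should be read as a consistency check on the claim, not as a spec sheet.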

This leadership memory and compute can provide up to 1.3 times the inference performance on Mistral 7B at FP16, 1.2 times the inference performance on Llama 3.1 70B at FP8 and 1.4 times the inference performance on Mixtral 8x7B at FP16 of the H200. (Nvidia has more recent devices on the market now and they are not yet available for comparisons, AMD said.)

“AMD certainly remains well positioned in the data center, but I think their CPU efforts are still their best positioned products. The market for AI acceleration/GPUs is still heavily favoring Nvidia and I don’t see that changing anytime soon. But the need for well optimized and purpose designed CPUs to complement as a host processor any AI accelerator or GPU is essential and AMD’s datacenter CPUs are competitive there,” said Ben Bajarin, an analyst at Creative Strategies, in an email to VentureBeat. “On the networking front, there is certainly good progress here technically and I imagine the more AMD can integrate this into their full stack approach to optimizing for the racks via the ZT Systems purchase, then I think their networking stuff becomes even more important.”

He added, “Broad point to make here, is the data center is under a complete transformation and we are still only in the early days of that which makes this still a wide open competitive field over the arc of time 10+ years. I’m not sure we can say with any certainty how this shakes out over that time but the bottom line is there is a lot of market share and $$ to go around to keep AMD, Nvidia, and Intel busy.”

AMD Instinct MI325X accelerators are currently on track for production shipments in Q4 2024 and are expected to have widespread system availability from a broad set of platform providers, including Dell Technologies, Eviden, Gigabyte, Hewlett Packard Enterprise, Lenovo, Supermicro and others starting in Q1 2025.

Updating its annual roadmap, AMD previewed the next-generation AMD Instinct MI350 series accelerators. Based on the AMD CDNA 4 architecture, AMD Instinct MI350 series accelerators are designed to deliver a 35 times improvement in inference performance compared to AMD CDNA 3-based accelerators.

The AMD Instinct MI350 series will continue to drive memory capacity leadership with up to 288GB of HBM3E memory per accelerator. The AMD Instinct MI350 series accelerators are on track to be available during the second half of 2025.

“AMD undoubtedly increased the distance between itself and Intel with Epyc. It currently has 50-60% market share with the hyperscalers and I don’t see that abating. AMD’s biggest challenge is to get share with enterprises. Best product rarely wins in the enterprise and AMD needs to invest more into sales and marketing to accelerate its enterprise growth,” said Patrick Moorhead, an analyst at Moor Insights & Strategy, in an email to VentureBeat. “It’s a bit harder to assess where AMD sits versus NVIDIA in Datacenter GPUs. There’s numbers flying all around, claims from both companies that they’re better. Signal65, our sister benchmarking company, hasn’t had the opportunity to do our own tests.”

And Moorhead added, “What I can unequivocally say is that AMD’s new GPUs, particularly the MI350, is a massive improvement given improved efficiency, performance and better support for lower bit rate models than its predecessors. It is a two horse race, with Nvidia in the big lead and AMD is quickly catching up and providing meaningful results. The fact that Meta’s live Llama 405B model runs exclusively on MI is a huge statement on competitiveness.”

AMD next-gen AI Networking

AMD is leveraging the most widely deployed programmable DPU for hyperscalers to power next-gen AI networking, said Soni Jiandani, senior vice president of the network technology solutions group, in a press briefing.

AI networking is split into two parts: the front-end, which delivers data and information to an AI cluster, and the back-end, which manages data transfer between accelerators and clusters. It is critical to ensuring CPUs and accelerators are utilized efficiently in AI infrastructure.

To effectively manage these two networks and drive high performance, scalability and efficiency across the entire system, AMD introduced the AMD Pensando Salina DPU for the front-end and the AMD Pensando Pollara 400, the industry’s first Ultra Ethernet Consortium (UEC) ready AI NIC, for the back-end.

The AMD Pensando Salina DPU is the third generation of the world’s most performant and programmable DPU, bringing up to two times the performance, bandwidth and scale compared to the previous generation.

Supporting 400G throughput for fast data transfer rates, the AMD Pensando Salina DPU is a critical component in AI front-end network clusters, optimizing performance, efficiency, security and scalability for data-driven AI applications.

The AMD Pensando Pollara 400, powered by the AMD P4 Programmable engine, supports next-gen RDMA software and is backed by an open networking ecosystem. It is critical for providing leadership performance, scalability and efficiency of accelerator-to-accelerator communication in back-end networks.

Both the AMD Pensando Salina DPU and AMD Pensando Pollara 400 are sampling with customers in Q4’24 and are on track for availability in the first half of 2025.

AMD AI software for Generative AI

AMD continues its investment in driving software capabilities and the open ecosystem to deliver powerful new features and capabilities in the AMD ROCm open software stack.

Within the open software community, AMD is driving support for AMD compute engines in the most widely used AI frameworks, libraries and models including PyTorch, Triton, Hugging Face and many others. This work translates to out-of-the-box performance and support with AMD Instinct accelerators on popular generative AI models like Stable Diffusion 3, Meta Llama 3, 3.1 and 3.2 and more than one million models at Hugging Face.

Beyond the community, AMD continues to advance its ROCm open software stack, bringing the latest features to support leading training and inference on Generative AI workloads. ROCm 6.2 now includes support for critical AI features like FP8 datatype, Flash Attention 3, Kernel Fusion and more. With these new additions, ROCm 6.2, compared to ROCm 6.0, provides up to a 2.4X performance improvement on inference and 1.8X on training for a variety of LLMs.

AMD launches 5th Gen AMD Epyc CPUs for the data center

AMD also announced the availability of the 5th Gen AMD Epyc processors, formerly codenamed “Turin,” the “world’s best server CPU for enterprise, AI and cloud,” the company said.

Using the Zen 5 core architecture, compatible with the broadly deployed SP5 platform and offering a broad range of core counts spanning from eight to 192, the AMD Epyc 9005 Series processors extend the record-breaking performance and energy efficiency of the previous generations with the top of stack 192 core CPU delivering up to 2.7 times the performance compared to the competition, AMD said.

New to the AMD Epyc 9005 Series CPUs is the 64 core AMD Epyc 9575F, tailor made for GPU-powered AI solutions that need the ultimate in host CPU capabilities. Boosting up to 5GHz, compared to the 3.8GHz processor of the competition, it provides up to 28% faster processing needed to keep GPUs fed with data for demanding AI workloads, AMD said.
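To put the clock-speed comparison in perspective, here is a minimal sketch that computes the raw frequency gap from the two figures quoted above and sets it beside AMD's claimed processing gain. The variable names are illustrative, and the comparison assumes nothing beyond the numbers in the article; real throughput depends on far more than peak boost clock.

```python
# Figures as stated in the article (AMD's claims).
EPYC_9575F_BOOST_GHZ = 5.0
COMPETITOR_CLOCK_GHZ = 3.8
CLAIMED_SPEEDUP_PCT = 28.0  # "up to 28% faster processing"

# Percentage by which the Epyc boost clock exceeds the competitor's clock.
clock_uplift_pct = (EPYC_9575F_BOOST_GHZ / COMPETITOR_CLOCK_GHZ - 1) * 100

print(f"Raw clock uplift:      {clock_uplift_pct:.1f}%")
print(f"Claimed speedup (AMD): {CLAIMED_SPEEDUP_PCT:.1f}%")
```

The claimed gain sits slightly below the raw clock ratio, which is the direction one would expect when a workload does not scale perfectly with frequency.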

“From powering the world’s fastest supercomputers, to leading enterprises, to the largest Hyperscalers, AMD has earned the trust of customers who value demonstrated performance, innovation and energy efficiency,” said Dan McNamara, senior vice president and general manager of the server business at AMD, in a statement. “With five generations of on-time roadmap execution, AMD has proven it can meet the needs of the data center market and give customers the standard for data center performance, efficiency, solutions and capabilities for cloud, enterprise and AI workloads.”

In a press briefing, McNamara credited Zen for AMD’s server market share rise from zero in 2017 to 34% in the second quarter of 2024 (according to Mercury Research).

Modern data centers run a variety of workloads, from supporting corporate AI-enablement initiatives, to powering large-scale cloud-based infrastructures to hosting the most demanding business-critical applications. The new 5th Gen AMD Epyc processors provide leading performance and capabilities for the broad spectrum of server workloads driving business IT today.

“This is a beast,” McNamara said. “We are really excited about it.”

The new Zen 5 core architecture provides up to 17% better instructions per clock (IPC) for enterprise and cloud workloads and up to 37% higher IPC in AI and high performance computing (HPC) compared to Zen 4.

With AMD Epyc 9965 processor-based servers, customers can expect significant impact in their real-world applications and workloads compared to Intel Xeon 8592+ CPU-based servers, AMD said: up to four times faster time to results on business applications such as video transcoding; up to 3.9 times faster time to insights for science and HPC applications that solve the world’s most challenging problems; and up to 1.6 times the performance per core in virtualized infrastructure.

In addition to leadership performance and efficiency in general purpose workloads, the 5th Gen AMD Epyc processors enable customers to drive fast time to insights and deployments for AI deployments, whether they are running a CPU or a CPU + GPU solution, McNamara said.

Compared to the competition, he said the 192 core Epyc 9965 CPU has up to 3.7 times the performance on end-to-end AI workloads, like TPCx-AI (derivative), which are critical for driving an efficient approach to generative AI.

In small and medium size enterprise-class generative AI models, like Meta’s Llama 3.1-8B, the Epyc 9965 provides 1.9 times the throughput performance compared to the competition.

Finally, the purpose-built AI host node CPU, the EPYC 9575F, can use its 5GHz max frequency boost to help a 1,000-node AI cluster drive up to 700,000 more inference tokens per second, AMD said.
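Broken down per node, that cluster-level figure is easier to picture. The sketch below simply divides the two numbers quoted above; the variable names are illustrative, and it assumes the gain is spread evenly across nodes, which the article does not state.

```python
# Figures as stated in the article (AMD's claim for a 1,000-node cluster).
CLUSTER_NODES = 1_000
EXTRA_TOKENS_PER_SECOND = 700_000

# Assumes the uplift is distributed evenly across all nodes.
extra_tokens_per_node = EXTRA_TOKENS_PER_SECOND / CLUSTER_NODES

print(f"Extra inference tokens per second per node: {extra_tokens_per_node:.0f}")
```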

By modernizing to a data center powered by these new processors to achieve 391,000 units of SPECrate2017_int_base general purpose computing performance, customers receive impressive performance for various workloads, while gaining the ability to use an estimated 71% less power and ~87% fewer servers. This gives CIOs the flexibility to either benefit from the space and power savings or add performance for day-to-day IT tasks while delivering impressive AI performance.

The entire lineup of 5th Gen AMD EPYC processors is available today, with support from Cisco, Dell, Hewlett Packard Enterprise, Lenovo and Supermicro as well as all major ODMs and cloud service providers providing a simple upgrade path for organizations seeking compute and AI leadership.

Dell said its 16-accelerated PowerEdge servers would be able to replace seven prior generation servers, with a 65% reduction of energy usage. HP Enterprise also took the stage to say Lumi, one of its customers, is working on a digital twin of the entire planet, dubbed Destination Earth, using the AMD tech.

Daniel Newman, CEO of The Futurum Group and an analyst, said in an email to VentureBeat, “Instinct and the new MI325X will be the hot button from today’s event. It isn’t a completely new launch, but the Q4 ramp will run alongside Nvidia Blackwell and will be the next important indicator of AMD’s trajectory as the most compelling competitor to Nvidia. The 325X ramping while the new 350 will be the biggest leap when it launches in 2H of 2025 making a 35 times AI performance leap from its CDNA3.”

Newman added, “Lisa Su’s declaration of a $500 billion AI accelerator market between 2023 and 2028 is an incredibly ambitious leap that represents more than 2x our current forecast and indicates a material upside for the market coming from a typically conservative CEO in Lisa Su. Other announcements in networking and compute (Turin) show the company’s continued expansion and growth.”

And he said, “The Epyc DC CPU business showed significant generational improvements. AMD has been incredibly successful in winning cloud datacenter business for its EPYC line now having more than 50% of share and in some cases we believe closer to 80%. For AMD, the big question is can it turn the strength in cloud and turn its attention to enterprise data center where Intel is still dominant–this could see AMD DC CPU business expand to more than its already largest ever 34%. Furthermore, can the company take advantage of its strength in cloud to win more DC GPU deals and fend off NVIDIA’s strength at more than 90% market share.”
