[Submitted on 3 Jul 2024]

Self-supervised Pretraining for Partial Differential Equations

Varun Madhavan, Amal S Sebastian, Bharath Ramsundar, Venkatasubramanian Viswanathan

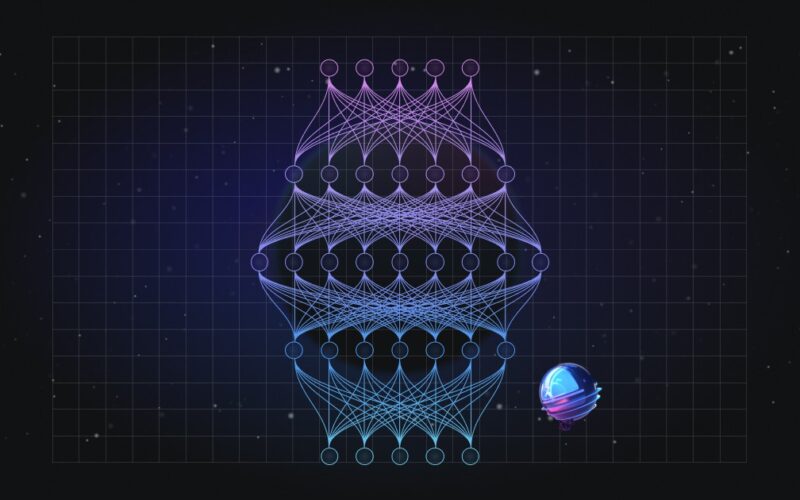

Abstract: In this work, we describe a novel approach to building a neural PDE solver leveraging recent advances in transformer-based neural network architectures. Our model can provide solutions for different values of PDE parameters without any need for retraining the network. The training is carried out in a self-supervised manner, similar to pretraining approaches applied in language and vision tasks. We hypothesize that the model is in effect learning a family of operators (one for each parameter value) mapping the initial condition to the solution of the PDE at any future time step t. We compare this approach with the Fourier Neural Operator (FNO), and demonstrate that it can generalize over the space of PDE parameters, despite having a higher prediction error for individual parameter values compared to the FNO. We show that performance on a specific parameter can be improved by finetuning the model with very small amounts of data. We also demonstrate that the model scales with data as well as model size.
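To make the idea of a "family of operators" concrete, the following is a minimal sketch and not the authors' setup. It assumes a 1D periodic heat equation u_t = nu * u_xx as a stand-in PDE (the abstract does not name the equations studied), where the operator taking the initial condition to the solution at time t is known in closed form for each value of the parameter nu. The function names, parameter ranges, and grid size are all hypothetical choices for illustration.

```python
import math
import random


def heat_solution_operator(u0_coeffs, nu, t):
    """Exact solution operator of u_t = nu * u_xx on a periodic domain [0, 2*pi).

    u0_coeffs maps an integer wavenumber k to the amplitude of sin(k * x)
    in the initial condition. Each mode decays by exp(-nu * k^2 * t).
    """
    return {k: a * math.exp(-nu * k * k * t) for k, a in u0_coeffs.items()}


def evaluate(coeffs, xs):
    """Evaluate a sine series on the grid points xs."""
    return [sum(a * math.sin(k * x) for k, a in coeffs.items()) for x in xs]


def make_sample(rng, n_points=32, max_mode=4):
    """Draw one (initial condition, parameter, time, solution) tuple.

    The ranges for nu and t are arbitrary illustrative assumptions.
    """
    xs = [2 * math.pi * i / n_points for i in range(n_points)]
    u0 = {k: rng.uniform(-1.0, 1.0) for k in range(1, max_mode + 1)}
    nu = rng.uniform(0.01, 0.1)
    t = rng.uniform(0.0, 1.0)
    return {
        "u0": evaluate(u0, xs),
        "nu": nu,
        "t": t,
        "u_t": evaluate(heat_solution_operator(u0, nu, t), xs),
    }


rng = random.Random(0)
dataset = [make_sample(rng) for _ in range(4)]
```

A model that generalizes over PDE parameters, in the sense the abstract describes, would need to approximate the map from `u0` to `u_t` across all sampled values of `nu` and `t`, rather than for one fixed `nu`.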

Submission history

From: Venkatasubramanian Viswanathan

[v1] Wed, 3 Jul 2024 16:39:32 UTC (1,141 KB)
