In large language model (LLM) training, effective orchestration and compute resource management pose a significant challenge. Automating resource provisioning, scaling, and workflow management is vital for optimizing resource usage and streamlining complex workflows, which in turn makes deep learning training more efficient. Simplified orchestration lets researchers and practitioners focus on model experimentation, hyperparameter tuning, and data analysis rather than on cumbersome infrastructure management. It also accelerates innovation, shortens time-to-market for new models and applications, and ultimately improves the efficiency and effectiveness of LLM research and development.

This post explores the seamless integration of AWS Trainium with AWS Batch, showcasing how the powerful machine learning (ML) acceleration capabilities of Trainium can be harnessed alongside the efficient orchestration functionalities offered by AWS Batch. Trainium provides massive scalability, enables effortless scaling of training jobs from small models to LLMs, and offers cost-effective access to computational power, making training LLMs affordable and accessible. AWS Batch is a managed service facilitating batch computing workloads on the AWS Cloud, handling tasks like infrastructure management and job scheduling, while enabling you to focus on application development and result analysis. AWS Batch provides comprehensive features, including managed batch computing, containerized workloads, custom compute environments, and prioritized job queues, along with seamless integration with other AWS services.

Solution overview

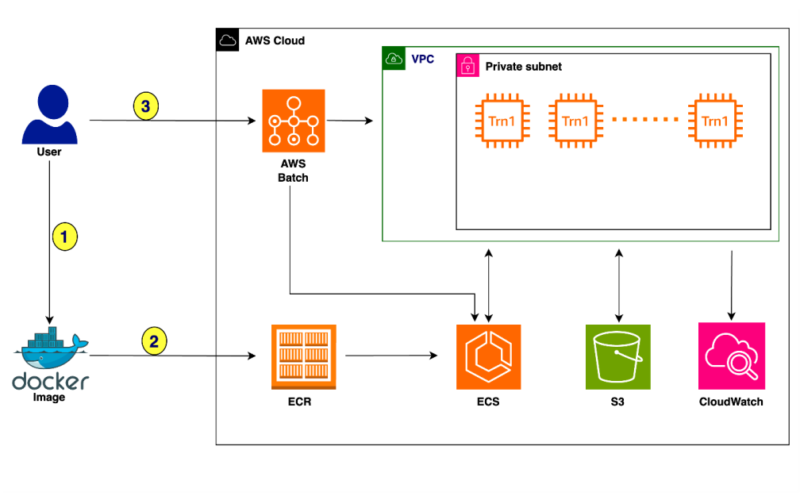

The following diagram illustrates the solution architecture.

The training process proceeds as follows:

- The user creates a Docker image configured to suit the demands of the underlying training task.

- The image is pushed to Amazon Elastic Container Registry (Amazon ECR) to make it ready for deployment.

- The user submits the training job to AWS Batch with the Docker image.

Let’s deep dive into this solution to see how you can integrate Trainium with AWS Batch. The following example demonstrates how to train the Llama 2-7B model using AWS Batch with Trainium.

Prerequisites

We advise against running the following scripts on your local machine. Instead, clone the GitHub repository and run the provided scripts on an x86_64-based instance, preferably a c5.xlarge instance running Linux (for example, Ubuntu). For this post, we run the example on an Amazon Linux 2023 instance.

You should have the following resources and tools before getting started with the training on AWS Batch:

Clone the repo

Clone the GitHub repo and navigate to the required directory:

Update the configuration

First, update the config.txt file to specify values for the following variables:

After you provide these values, your config.txt file should look something like the following code:

Get the Llama tokenizer

To tokenize the dataset, you need the tokenizer from Hugging Face. Follow the instructions to access the Llama tokenizer. (You need to acknowledge and accept the license terms.) After you’re granted access, download the tokenizer from Hugging Face and place the tokenizer.model file in the root directory (llama2).

Set up Llama training

Run the setup.sh script, which streamlines the prerequisite steps for initiating the AWS Batch training. This script downloads the necessary Python files for training the Llama 2-7B model. Additionally, it performs environment variable substitution within the provided templates and scripts designed to establish AWS Batch resources. When it runs, it makes sure your directory structure conforms to the following setup:

Tokenize the dataset

Next, run the download_and_tokenize_data.sh script to complete the data preprocessing steps for Llama 2-7B training. In this instance, we use the wikicorpus dataset sourced from Hugging Face. After the dataset retrieval, the script performs tokenization and uploads the tokenized dataset to the predefined S3 location specified within the config.txt configuration file. The following screenshots show the preprocessing results.

Provision resources

Next, run the create_resources.sh script, which orchestrates the provisioning of the required resources for the training task. This includes creation of a placement group, launch template, compute environment, job queue, and job definition. The following screenshots illustrate this process.
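To make the job definition step more concrete, the following is a minimal Python sketch of the kind of multi-node parallel job definition that could be registered with the boto3 `register_job_definition` call. It is not the content of create_resources.sh. The function name, the container command, the memory value, and the image URI are assumptions for illustration; the sketch only builds the request and does not call AWS.

```python
def build_job_definition(name, image_uri, num_nodes=4, neuron_devices=16):
    """Build keyword arguments for boto3's batch.register_job_definition.

    Assumptions (not taken from the post's scripts): the container command,
    the memory reservation, and mapping one /dev/neuronN device per
    Trainium chip into the container.
    """
    # Expose each Neuron device on the host to the container.
    devices = [
        {
            "hostPath": f"/dev/neuron{i}",
            "containerPath": f"/dev/neuron{i}",
            "permissions": ["READ", "WRITE"],
        }
        for i in range(neuron_devices)
    ]
    container = {
        "image": image_uri,
        # Hypothetical entry point; the real one lives in the Docker image.
        "command": ["/bin/bash", "-c", "./llama_batch_training.sh"],
        "resourceRequirements": [
            {"type": "VCPU", "value": "128"},
            # MiB; assumed value that leaves headroom on a 512 GiB instance.
            {"type": "MEMORY", "value": "500000"},
        ],
        "linuxParameters": {"devices": devices},
    }
    return {
        "jobDefinitionName": name,
        "type": "multinode",
        "nodeProperties": {
            "numNodes": num_nodes,
            "mainNode": 0,
            # "0:" applies the same container settings to every node.
            "nodeRangeProperties": [{"targetNodes": "0:", "container": container}],
        },
    }


if __name__ == "__main__":
    request = build_job_definition(
        "llama2-trn1-example",  # hypothetical name
        "123456789012.dkr.ecr.us-west-2.amazonaws.com/example-repo:latest",
    )
    print(request["nodeProperties"]["numNodes"])
```

With AWS credentials configured, the resulting dictionary could be passed as `boto3.client("batch").register_job_definition(**request)`.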

Build and push the Docker image

Now you can run the build_and_push_docker_image.sh script, which builds a Docker container image customized for your training task. The script starts from a Deep Learning Container image published by the Neuron team, which contains the required software stack, and then adds instructions for running the Llama 2-7B training on top of it. The training script uses the neuronx_distributed library with tensor parallelism along with the ZeRO-1 optimizer. The resulting image is then pushed to your ECR repository, as specified by the ECR_REPO variable in the config.txt file.

If you want to modify any of the Llama training hyperparameters, make the required changes in ./docker/llama_batch_training.sh before running build_and_push_docker_image.sh.
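When adjusting hyperparameters, it helps to see how tensor parallelism and data parallelism divide the available NeuronCores. The following sketch works through the arithmetic. It assumes 32 NeuronCores per trn1.32xlarge instance (16 Trainium chips with 2 NeuronCores each) and a tensor parallel degree of 8; the tensor parallel degree is an illustrative assumption, not a value confirmed by this post, so check the training script for the actual setting.

```python
def parallel_layout(num_nodes, cores_per_node=32, tp_degree=8):
    """Split the total worker count into tensor- and data-parallel groups.

    tp_degree=8 is an assumed value for illustration only.
    """
    world_size = num_nodes * cores_per_node
    if world_size % tp_degree != 0:
        raise ValueError("world size must be divisible by the tensor parallel degree")
    # Each tensor-parallel group holds one sharded model replica; the
    # remaining factor is the number of data-parallel replicas.
    dp_degree = world_size // tp_degree
    return {"world_size": world_size, "tp_degree": tp_degree, "dp_degree": dp_degree}


if __name__ == "__main__":
    print(parallel_layout(num_nodes=4))
    # {'world_size': 128, 'tp_degree': 8, 'dp_degree': 16}
```

With ZeRO-1, the optimizer state is sharded across the data-parallel ranks, so in this example each rank would hold roughly one sixteenth of the optimizer state for its model shard.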

The following screenshots illustrate the process for building and pushing the Docker image.

Submit the training job

Run the submit_batch_job.sh script to initiate the AWS Batch job and start the Llama 2 model training, as shown in the following screenshots.

After the batch job is submitted, an Amazon Elastic Container Service (Amazon ECS) cluster is dynamically provisioned. When it’s operational, you can navigate to the cluster to monitor the tasks running on the trn1.32xlarge instances launched by this job. By default, this example is configured to use four trn1.32xlarge instances. To customize this setting, modify the numNodes parameter in the submit_batch_job.sh script.
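For readers who prefer to submit from Python, the node count can also be overridden at submission time through the `nodeOverrides` field of the boto3 `submit_job` call. The following is a minimal sketch, not the content of submit_batch_job.sh; the function names, job name, queue name, job definition name, and Region are hypothetical placeholders.

```python
def build_submit_request(job_name, job_queue, job_definition, num_nodes=4):
    """Build keyword arguments for boto3's batch.submit_job."""
    if num_nodes < 1:
        raise ValueError("num_nodes must be at least 1")
    return {
        "jobName": job_name,
        "jobQueue": job_queue,
        "jobDefinition": job_definition,
        # Overrides the numNodes value stored in the job definition.
        "nodeOverrides": {"numNodes": num_nodes},
    }


def submit(request, region="us-west-2"):
    """Submit the job and return its ID (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the builder above works without it

    client = boto3.client("batch", region_name=region)
    return client.submit_job(**request)["jobId"]


if __name__ == "__main__":
    # All names below are placeholders for illustration.
    request = build_submit_request(
        "llama2-training", "example-queue", "example-job-definition", num_nodes=2
    )
    print(request)
```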

Logs and monitoring

After the job submission, you can use Amazon CloudWatch Logs for comprehensive monitoring, storage, and viewing of all logs generated by AWS Batch. Complete the following steps to access the logs:

- On the CloudWatch console, choose Log groups under Logs in the navigation pane.

- Choose /aws/batch/job to view the batch job logs.

- Look for log groups that match your AWS Batch job names or job definitions.

- Choose the job to view its details.

The following screenshot shows an example.
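The same logs can be read programmatically. The following sketch wraps the CloudWatch Logs `get_log_events` call and returns the most recent messages from one log stream in the /aws/batch/job log group. The helper name and the example stream name are hypothetical; in practice, the log stream name for a job can be obtained from the AWS Batch `describe_jobs` response.

```python
def tail_job_log(logs_client, log_stream_name, log_group="/aws/batch/job", limit=50):
    """Return the latest log messages for one AWS Batch log stream.

    logs_client is expected to be a boto3 CloudWatch Logs client,
    for example boto3.client("logs").
    """
    response = logs_client.get_log_events(
        logGroupName=log_group,
        logStreamName=log_stream_name,
        limit=limit,
        startFromHead=False,  # read from the end of the stream
    )
    return [event["message"] for event in response["events"]]


if __name__ == "__main__":
    import boto3  # requires AWS credentials to be configured

    client = boto3.client("logs")
    # Placeholder stream name; replace with the one reported for your job.
    for line in tail_job_log(client, "example-job-definition/default/example-task-id"):
        print(line)
```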

Checkpoints

Checkpoints generated during training will be stored in the predefined S3 location specified as CHECKPOINT_SAVE_URI in the config.txt file. By default, the checkpoint is saved when training is complete. However, you can adjust this behavior by opting to save the checkpoint after every N steps within the training loop. For detailed instructions on this customization, refer to Checkpointing.
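The "every N steps" behavior comes down to a simple check inside the training loop. The following sketch illustrates the idea only; it is not the logic of the training script. The function names and the `step_<n>` naming under the checkpoint URI are hypothetical.

```python
def should_save_checkpoint(step, total_steps, save_every=None):
    """Decide whether to save at this step.

    Always saves at the final step (the default behavior described above);
    additionally saves every `save_every` steps when that value is set.
    """
    if save_every is not None and save_every < 1:
        raise ValueError("save_every must be a positive integer")
    if step == total_steps:
        return True
    return save_every is not None and step % save_every == 0


def checkpoint_uri(base_uri, step):
    """Build a per-step location under the base URI (hypothetical layout)."""
    return f"{base_uri.rstrip('/')}/step_{step}"


if __name__ == "__main__":
    saved = [s for s in range(1, 11) if should_save_checkpoint(s, 10, save_every=4)]
    print(saved)  # [4, 8, 10]
    print(checkpoint_uri("s3://example-bucket/checkpoints/", 8))
    # s3://example-bucket/checkpoints/step_8
```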

Clean up

When you’re done, run the cleanup.sh script to remove the resources created in this post. The script removes the launch template, placement group, job definition, job queue, and compute environment. AWS Batch automatically handles the cleanup of the ECS stack and Trainium instances, so there’s no need to manually remove or stop them.

Conclusion

The seamless integration of Trainium with AWS Batch represents a significant advancement in the realm of ML training. By combining the unparalleled capabilities of Trainium with the powerful orchestration functionalities of AWS Batch, you stand to benefit in numerous ways. Firstly, you gain access to massive scalability, with the ability to effortlessly scale training jobs from small models to LLMs. With up to 16 Trainium chips per instance and the potential for distributed training across tens of thousands of accelerators, you can tackle even the most demanding training tasks with ease by virtue of Trainium instances. Additionally, it offers a cost-effective solution, helping you harness the power you need at an appealing price point. With the fully managed service offered by AWS Batch for computing workloads, you can offload operational complexities such as infrastructure provisioning and job scheduling, allowing you to focus your efforts on building applications and analyzing results. Ultimately, the integration of Trainium with AWS Batch empowers you to accelerate innovation, shorten time-to-market for new models and applications, and enhance the overall efficiency and effectiveness of your ML endeavors.

Now that you have learned about orchestrating Trainium using AWS Batch, we encourage you to try it out for your next deep learning training job. You can explore more tutorials that will help you gain hands-on experience with AWS Batch and Trainium, and enable you to manage your deep learning training workloads and resources for better performance and cost-efficiency. So why wait? Start exploring these tutorials today and take your deep learning training to the next level with Trainium and AWS Batch!

About the authors

Scott Perry is a Solutions Architect on the Annapurna ML accelerator team at AWS. Based in Canada, he helps customers deploy and optimize deep learning training and inference workloads using AWS Inferentia and AWS Trainium. His interests include large language models, deep reinforcement learning, IoT, and genomics.

Sadaf Rasool is a Machine Learning Engineer with the Annapurna ML Accelerator team at AWS. As an enthusiastic and optimistic AI/ML professional, he holds firm to the belief that the ethical and responsible application of AI has the potential to enhance society in the years to come, fostering both economic growth and social well-being.
